NVIDIA has announced detailed specifications for its new 88-core Vera data center CPU, which it describes as the world’s first processor designed specifically for agentic AI and reinforcement learning.

According to NVIDIA, for large-scale data processing, AI training, and inference, the chip is twice as efficient as traditional rack-scale CPUs and 50% faster. NVIDIA founder and CEO Jensen Huang emphasized that the CPU is no longer merely a supporting component for AI models but has become a core driving force. NVIDIA says Vera will help AI systems “think” faster and scale further.

In terms of core architecture, the Vera data center CPU features 88 Olympus cores custom-designed by NVIDIA. To meet the stringent concurrency requirements of a multi-tenant AI factory, each core supports spatial multithreading, which lets it run two tasks simultaneously with stable performance.

Furthermore, Vera employs a second-generation low-power memory subsystem. Built on LPDDR5X memory, it offers bandwidth of up to 1.2 TB/s. Compared with general-purpose CPUs, NVIDIA says, it doubles the bandwidth while halving power consumption.

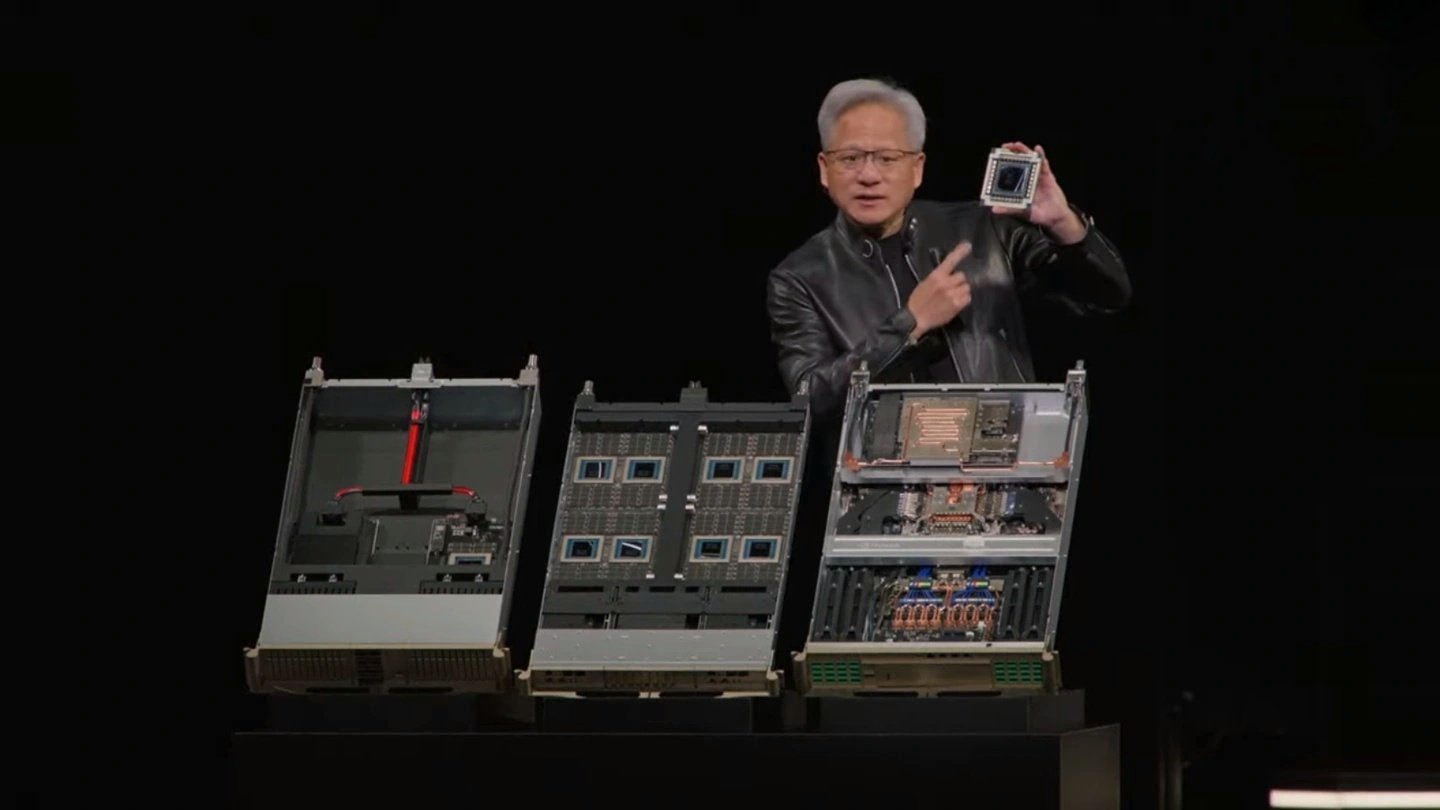

To meet the extreme scalability demands of data centers, NVIDIA simultaneously launched the Vera CPU rack, based on the MGX modular architecture. The rack integrates 256 liquid-cooled Vera CPUs and can sustain more than 22,500 independent, full-speed concurrent computing environments, more than 45,000 independent threads, and 400 TB of memory. NVIDIA says this yields a 6x increase in CPU throughput and doubles the performance of AI workloads.
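The rack-level figures can be cross-checked against the per-chip specifications with some simple arithmetic. The sketch below assumes that the "more than 22,500" computing environments correspond to one environment per physical core and that the thread count comes from two threads per core; the article does not state this mapping explicitly. The per-CPU memory figure is likewise only derived from the rounded 400 TB total, not quoted from NVIDIA.

```python
# Figures taken from the article.
CPUS_PER_RACK = 256
CORES_PER_CPU = 88
RACK_MEMORY_TB = 400

# Assumption: spatial multithreading gives two hardware threads per core.
THREADS_PER_CORE = 2


def rack_totals(cpus=CPUS_PER_RACK, cores_per_cpu=CORES_PER_CPU,
                threads_per_core=THREADS_PER_CORE):
    """Return (total cores, total threads) for one rack."""
    cores = cpus * cores_per_cpu
    threads = cores * threads_per_core
    return cores, threads


cores, threads = rack_totals()

# Derived value only: average memory per CPU implied by the rounded rack total.
memory_per_cpu_tb = RACK_MEMORY_TB / CPUS_PER_RACK

print(f"cores per rack:   {cores}")    # 22528, consistent with "over 22,500"
print(f"threads per rack: {threads}")  # 45056, consistent with "more than 45,000"
print(f"memory per CPU:   {memory_per_cpu_tb} TB (implied average)")
```

Under these assumptions, 256 CPUs with 88 cores each give 22,528 cores and 45,056 threads, which lines up with both quoted figures.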

At the data transfer level, Vera is tightly coupled to the GPU through NVLink-C2C interconnect technology, which provides coherent bandwidth of up to 1.8 TB/s, up to 7 times that of PCIe 6.0.
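As a rough sanity check on the "up to 7 times" figure, the sketch below compares 1.8 TB/s with the raw bandwidth of a PCIe 6.0 link. The article does not say which PCIe configuration NVIDIA used as the baseline, so the x16 link width, the bidirectional total, and the decision to ignore protocol overhead are all assumptions made here for illustration.

```python
# Figure taken from the article: NVLink-C2C bandwidth, expressed in GB/s.
NVLINK_C2C_GB_S = 1800


def pcie_raw_bidirectional_gb_s(gt_per_s=64, lanes=16):
    """Raw bidirectional bandwidth of a PCIe link in GB/s.

    Assumptions: PCIe 6.0 signalling at 64 GT/s per lane, an x16 link,
    one bit per transfer per lane, and no encoding or protocol overhead.
    """
    per_direction = gt_per_s * lanes / 8  # bits to bytes
    return per_direction * 2              # both directions combined


pcie6_gb_s = pcie_raw_bidirectional_gb_s()
ratio = NVLINK_C2C_GB_S / pcie6_gb_s

print(f"PCIe 6.0 x16 (raw, bidirectional): {pcie6_gb_s:.0f} GB/s")
print(f"NVLink-C2C / PCIe 6.0: {ratio:.2f}x")
```

With these assumptions the ratio comes out at roughly 7x, which matches the quoted comparison; a different baseline, such as a narrower link or a single direction, would give a different multiple.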

Vera CPUs have entered full production and are expected to begin shipping in volume to key customers such as Meta and Oracle in the second half of this year.